How to Install & Run MiniCPM-o 2.6 Multimodal LLM Locally

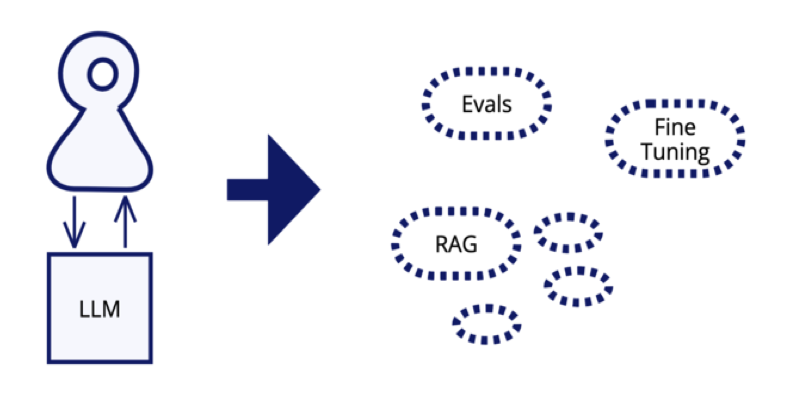

MiniCPM-o 2.6 is a multimodal large language model (LLM) that handles vision, speech, and text. It has 8 billion parameters and is built on SigLip-400M for vision, Whisper-medium-300M for speech recognition, and Qwen2.5-7B for language understanding. MiniCPM-o 2.6 significantly improves on the performance of its predecessor, MiniCPM-V 2.6, and offers features such as real-time speech conversation, multimodal live streaming, and optical character recognition (OCR). Its ability to process high-resolution images, support bilingual speech interaction, and efficiently handle multimodal data streams makes it a versatile tool for applications ranging from content creation to enterprise AI solutions.

This article will guide you step by step through installing and running MiniCPM-o 2.6 locally, and then test one of the model's capabilities: video-to-text inference.

Prerequisites

The minimum system requirements for this use case are:

GPUs: A100 or RTX 4090 (for smooth execution).

Disk Space: 200 GB

RAM: At least 16 GB.

Jupyter Notebook installed.

Note: These requirements vary considerably with the use case. A large-scale deployment may call for a higher-end configuration.
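As a quick sanity check against the list above, the following is a minimal sketch that measures free disk space and total RAM using only the Python standard library. The helper names (`free_disk_gb`, `total_ram_gb`, `meets_minimum`) are illustrative, and the RAM check relies on `os.sysconf`, which is assumed to be available (Linux and most Unix systems); elsewhere it reports the value as unknown.

```python
import os
import shutil

# Thresholds taken from the prerequisites list above.
REQUIRED_DISK_GB = 200
REQUIRED_RAM_GB = 16


def free_disk_gb(path="."):
    """Return the free disk space at `path` in gigabytes (1 GB = 1e9 bytes)."""
    return shutil.disk_usage(path).free / 1e9


def total_ram_gb():
    """Return total physical RAM in gigabytes, or None if it cannot be read."""
    try:
        page_size = os.sysconf("SC_PAGE_SIZE")
        page_count = os.sysconf("SC_PHYS_PAGES")
    except (AttributeError, ValueError, OSError):
        # os.sysconf or these keys are missing on this platform.
        return None
    return page_size * page_count / 1e9


def meets_minimum(value, minimum):
    """True only if the value is known and at least the minimum."""
    return value is not None and value >= minimum


disk = free_disk_gb()
ram = total_ram_gb()
print(f"Free disk: {disk:.1f} GB, enough: {meets_minimum(disk, REQUIRED_DISK_GB)}")
print(f"Total RAM: {ram if ram is None else round(ram, 1)} GB, "
      f"enough: {meets_minimum(ram, REQUIRED_RAM_GB)}")
# The GPU itself is checked later with nvidia-smi; here we only see if the tool exists.
print("nvidia-smi on PATH:", shutil.which("nvidia-smi") is not None)
```

This only reports what the machine has; it does not install or change anything.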

Step-by-step process to install & run MiniCPM-o2.6 MLLM locally

For this tutorial, we’ll use a GPU-powered Virtual Machine from NodeShift, which provides high-compute Virtual Machines at an affordable cost and meets GDPR, SOC2, and ISO27001 requirements. It also offers an intuitive interface that makes it easier for beginners to get started with cloud deployments. However, feel free to use any cloud provider of your choice and follow the same steps for the rest of the tutorial.

Step 1: Setting up a NodeShift Account

Visit app.nodeshift.com and create an account by filling in basic details, or sign up with your Google/GitHub account.

If you already have an account, log in and go straight to your dashboard.

Step 2: Create a GPU Node

After logging in, you should see the dashboard. From there:

1) Navigate to the menu on the left side.

2) Click on the GPU Nodes option.

3) Click Start to begin creating your first GPU node.

These GPU nodes are GPU-powered virtual machines from NodeShift. They are highly customizable and let you configure the GPU (ranging from H100s to A100s), CPUs, RAM, and storage according to your needs.

Step 3: Selecting configuration for GPU (model, region, storage)

1) For this tutorial, we’ll use the RTX 4090 GPU; however, you can choose any GPU that suits your needs.

2) Similarly, we’ll set the storage to 200 GB using the slider. You can also select the region for your GPU from the available ones.

Step 4: Choose GPU Configuration and Authentication method

1) After selecting your configuration options, you'll see the VMs available in your region that match (or come very close to) your configuration. In our case, we'll choose a 1x RTX 4090 GPU node with 12 vCPUs, 96 GB RAM, and a 200 GB SSD.

2) Next, you'll need to select an authentication method. Two methods are available: Password and SSH Key. We recommend using SSH keys, as they are a more secure option. To create one, head over to our official documentation.

Step 5: Choose an Image

The final step is to choose an image for the VM. In our case, that is Jupyter Notebook, where we’ll deploy the model and run inference.

That's it! You are now ready to deploy the node. Finalize the configuration summary, and if it looks good, click Create to deploy the node.

Step 6: Connect to active Compute Node using SSH

1) Once you create the node, it will be deployed within a few seconds to a minute. When deployment finishes, you will see the status Running in green, meaning that the Compute node is ready to use!

2) Once your GPU shows this status, click the three dots on the right and then click Connect with SSH. This will open a new tab with a Jupyter Notebook session in which we can run our model.

Step 7: Setting up Python Notebook

Start by creating a .ipynb notebook by clicking on Python 3 (ipykernel).

Next, if you want to check the GPU details, run the following command in a Jupyter Notebook cell:

!nvidia-smi

Output:
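If you would rather read the same GPU details from Python, for example to decide on settings later in the notebook, the sketch below calls `nvidia-smi` with its CSV query options and parses the result. The function names are illustrative, and the sample row mentioned in the comments is made up for demonstration; the real values depend on your node.

```python
import subprocess


def parse_gpu_rows(csv_text):
    """Parse rows of the form "<gpu name>, <total memory in MiB>".

    This is the shape produced by:
    nvidia-smi --query-gpu=name,memory.total --format=csv,noheader,nounits
    (for example, a row might look like "NVIDIA GeForce RTX 4090, 24564").
    """
    gpus = []
    for line in csv_text.strip().splitlines():
        if not line.strip():
            continue
        # Split on the last comma so a comma inside the name cannot break parsing.
        name, memory_mib = [field.strip() for field in line.rsplit(",", 1)]
        gpus.append((name, int(memory_mib)))
    return gpus


def query_gpus():
    """Run nvidia-smi and return parsed rows, or [] if the tool is unavailable."""
    cmd = [
        "nvidia-smi",
        "--query-gpu=name,memory.total",
        "--format=csv,noheader,nounits",
    ]
    try:
        result = subprocess.run(cmd, capture_output=True, text=True, check=True)
    except (FileNotFoundError, subprocess.CalledProcessError):
        return []
    return parse_gpu_rows(result.stdout)


for gpu_name, memory_mib in query_gpus():
    print(f"{gpu_name}: {memory_mib} MiB total")
```

On a machine without an NVIDIA driver the loop simply prints nothing.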

Step 8: Install MiniCPM and its dependencies

1) Clone the MiniCPM official repository.

!git clone https://github.com/OpenBMB/MiniCPM-o.git

Output:

2) Move inside the project directory and install dependencies.

!cd MiniCPM-o && pip install -r requirements_o2.6.txt

Output:

3) Install one more external dependency.

The flash_attn package is not one of the project's internal dependencies, but the model setup may throw an error if it is missing. Therefore, we'll install it separately as an external dependency.

!pip install flash_attn

Output:
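The model-loading code in Step 9 accepts either 'sdpa' or 'flash_attention_2' for attn_implementation. As a small sketch, you could pick the value based on whether flash_attn can actually be imported in the current environment. The helper name `pick_attn_implementation` is hypothetical, and a successful import is only assumed to mean the package is usable on your GPU; it does not prove it.

```python
import importlib.util


def pick_attn_implementation():
    """Return 'flash_attention_2' if flash_attn is importable, otherwise 'sdpa'."""
    if importlib.util.find_spec("flash_attn") is not None:
        return "flash_attention_2"
    # Fall back to PyTorch's built-in scaled dot-product attention.
    return "sdpa"


print("attn_implementation =", pick_attn_implementation())
```

The returned string can then be passed as the attn_implementation argument when loading the model.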

Step 9: Load and run the model for inference

Once the installation is done, we'll download the model and run inference.

1) Load the model with the following code snippet.

import torch
from PIL import Image
from transformers import AutoModel, AutoTokenizer

model = AutoModel.from_pretrained(
    'openbmb/MiniCPM-o-2_6',
    trust_remote_code=True,
    attn_implementation='sdpa', # sdpa or flash_attention_2
    torch_dtype=torch.bfloat16,
    init_vision=True,
    init_audio=True,
    init_tts=True
)
model = model.eval().cuda()
tokenizer = AutoTokenizer.from_pretrained('openbmb/MiniCPM-o-2_6', trust_remote_code=True)

model.init_tts()
model.tts.float()

Output:

Depending on your network speed and hardware configuration, the model may take some time to download and load. Once the model is loaded successfully, we can move on to the inference part.
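To see roughly why a 24 GB card such as the RTX 4090 can hold this model, here is a back-of-the-envelope sketch of the memory needed for the weights alone. It assumes the 8 billion parameter figure from the introduction and the usual 2 bytes per bfloat16 value; activations, the frames being processed, and the TTS module kept in float32 all add to this, so treat the number as a lower bound rather than a measurement.

```python
# Bytes used to store one parameter in each dtype.
BYTES_PER_PARAM = {"float32": 4, "bfloat16": 2, "float16": 2}


def weight_memory_gb(num_params, dtype):
    """Approximate memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


params = 8e9  # "8 billion parameters", as stated in the introduction

print(weight_memory_gb(params, "bfloat16"))  # 16.0
print(weight_memory_gb(params, "float32"))   # 32.0
```

This is one reason the loading code uses torch.bfloat16: the same weights in float32 would need about twice the memory.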

2) Run the model.

Although the model supports several kinds of multimodal inference, for the scope of this tutorial we'll test just one of its capabilities: video-to-text conversion.

from PIL import Image
from decord import VideoReader, cpu

MAX_NUM_FRAMES = 64 # if cuda OOM set a smaller number

def encode_video(video_path):
    # Sample roughly one frame per second, capped at MAX_NUM_FRAMES frames.
    def uniform_sample(l, n):
        # Pick n evenly spaced items from the list l.
        gap = len(l) / n
        idxs = [int(i * gap + gap / 2) for i in range(n)]
        return [l[i] for i in idxs]

    vr = VideoReader(video_path, ctx=cpu(0))
    # Step between sampled frames (about 1 second); at least 1 so range() never gets a zero step.
    sample_fps = max(1, round(vr.get_avg_fps() / 1)) # FPS
    frame_idx = [i for i in range(0, len(vr), sample_fps)]
    if len(frame_idx) > MAX_NUM_FRAMES:
        frame_idx = uniform_sample(frame_idx, MAX_NUM_FRAMES)
    frames = vr.get_batch(frame_idx).asnumpy()
    frames = [Image.fromarray(v.astype('uint8')) for v in frames]
    print('num frames:', len(frames))
    return frames

video_path = "test_video.mp4"
frames = encode_video(video_path)
question = "Describe the video"
msgs = [
    {'role': 'user', 'content': frames + [question]},
]

# Set decode params for video
params = {}
params["use_image_id"] = False
params["max_slice_nums"] = 2 # use 1 if cuda OOM and video resolution > 448*448

answer = model.chat(
    msgs=msgs,
    tokenizer=tokenizer,
    **params
)
print(answer)
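To make the frame selection in encode_video easier to follow, here is a standalone sketch of the same index arithmetic that runs without a video file or decord. The function `select_frame_indices` is a hypothetical helper that takes the frame count and average FPS as plain numbers; the `max(1, ...)` guard on the step is an addition for safety with very low FPS values.

```python
def uniform_sample(items, n):
    """Pick n items at evenly spaced positions (the centre of each of n equal bins)."""
    gap = len(items) / n
    return [items[int(i * gap + gap / 2)] for i in range(n)]


def select_frame_indices(total_frames, avg_fps, max_frames=64):
    """Reproduce the index selection of encode_video for a given frame count and FPS."""
    step = max(1, round(avg_fps))  # about one sampled frame per second of video
    indices = list(range(0, total_frames, step))
    if len(indices) > max_frames:
        # Too many candidates: thin them out evenly down to max_frames.
        indices = uniform_sample(indices, max_frames)
    return indices


print(uniform_sample(list(range(10)), 4))    # [1, 3, 6, 8]
print(select_frame_indices(300, 30))         # 10 s at 30 FPS: [0, 30, ..., 270]
print(len(select_frame_indices(3000, 30)))   # 100 s at 30 FPS, capped: 64
```

In other words, a short clip contributes one frame per second, while a longer clip is thinned evenly so that the model never receives more than the frame budget.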

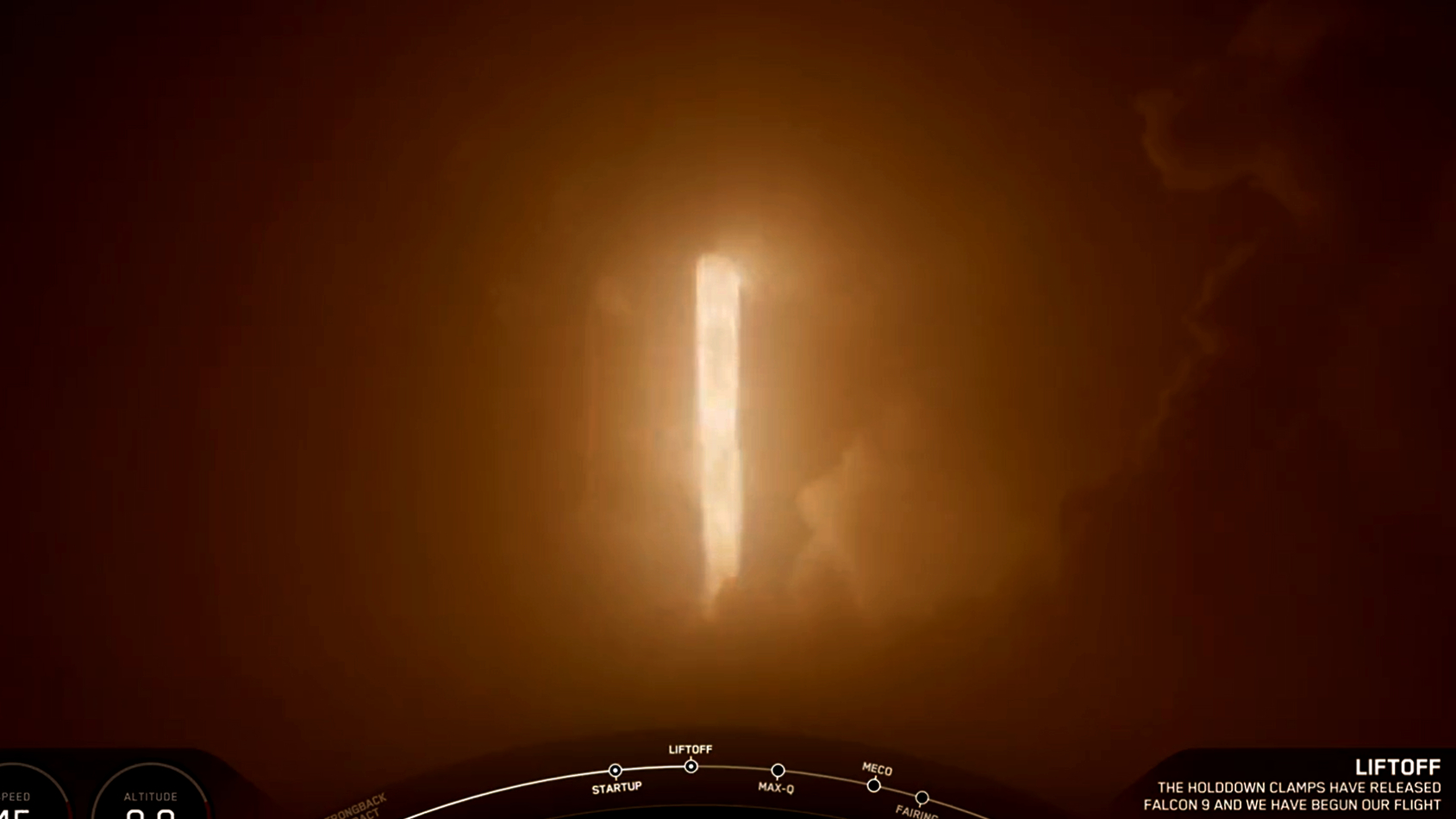

Before running the code above, we downloaded the following video and saved it as "test_video.mp4" in the directory the notebook runs from, so that the relative path in video_path resolves. We pass it to the model along with a prompt:

Prompt: "Describe the video"

Video: https://www.pexels.com/video/teacher-giving-test-results-to-his-students-7092235/

Here's the output generated by the model:

As the output above shows, the model describes the video well, capturing both small details and the overall sense of the scene.
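The comments in the Step 9 code suggest using a smaller MAX_NUM_FRAMES if CUDA runs out of memory. One way to automate that advice is a retry loop that steps down through smaller frame budgets, sketched below. Here `run_inference` is a hypothetical callable that you would write yourself (for example, one that samples up to `max_frames` frames and calls model.chat), and the sketch assumes that an out-of-memory failure surfaces as a RuntimeError whose message contains "out of memory", which is how PyTorch typically reports it.

```python
def run_with_fallback(run_inference, frame_budgets=(64, 32, 16)):
    """Call run_inference(max_frames) with progressively smaller frame budgets.

    Returns (max_frames_used, result). Errors other than out-of-memory are re-raised.
    """
    last_error = None
    for max_frames in frame_budgets:
        try:
            return max_frames, run_inference(max_frames)
        except RuntimeError as err:
            if "out of memory" not in str(err).lower():
                raise  # not an OOM problem, so do not hide it
            last_error = err
    raise RuntimeError(f"all frame budgets failed: {frame_budgets}") from last_error


# Stand-in for real inference: pretends that more than 32 frames do not fit in memory.
def fake_inference(max_frames):
    if max_frames > 32:
        raise RuntimeError("CUDA out of memory (simulated)")
    return f"described {max_frames} frames"


print(run_with_fallback(fake_inference))  # (32, 'described 32 frames')
```

Fewer frames means the model sees less of the video, so the description may lose detail as the budget shrinks.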

Conclusion

By following the steps outlined in this guide, you can unlock the full potential of MiniCPM-o 2.6 and integrate its advanced capabilities into your workflows with ease. Additionally, NodeShift provides an optimized deployment environment that enhances the model's efficiency, scalability, and performance, ensuring a seamless experience for the users across various domains.

For more information about NodeShift:
